14th April 2026

In 2026, individual artificial intelligence tool adoption across the humanitarian ecosystem continues to outpace overall organisational readiness. What are the implications of this sharpening trend?

No single actor can shift the humanitarian AI landscape alone. It takes a movement, built from contributions big and small – including yours.

Navigating sectoral challenges and rapid technological change

As we are all too aware, 2026 continues to be characterised by profound challenge for everyone in the humanitarian space – felt most acutely by those in crisis-affected contexts. Alongside this, AI developments continue to accelerate, adding to the sense of ‘noise’ and confusion across the sector.

To play our part in providing data and evidence, and to promote dialogue on how AI is playing out across the humanitarian system at large, we partnered with Data Friendly Space (DFS) in May 2025 to lead the first comprehensive global study into how humanitarians are using AI, reaching more than 2,500 respondents from 144 countries. As my research co-lead Madigan Johnson put it at the time: “we had tapped into a massive underground conversation”, signalling the huge demand from humanitarians for insights and guidance on how to navigate AI in their work. At the end of the foundational phase in November, I wrote a reflection piece on the research, outputs and sector engagement.

Our approach in 2026: from AI mapping to convening and collective action

In response to this sector demand, and as part of HLA’s broader convening strategy and commitment to local leadership, throughout the first quarter of 2026 we led the second phase of this work. We once again teamed up with DFS to focus on rapid data collection, community engagement and mobilisation through surveys and convening using digital platforms. Together with partners and contributors, we:

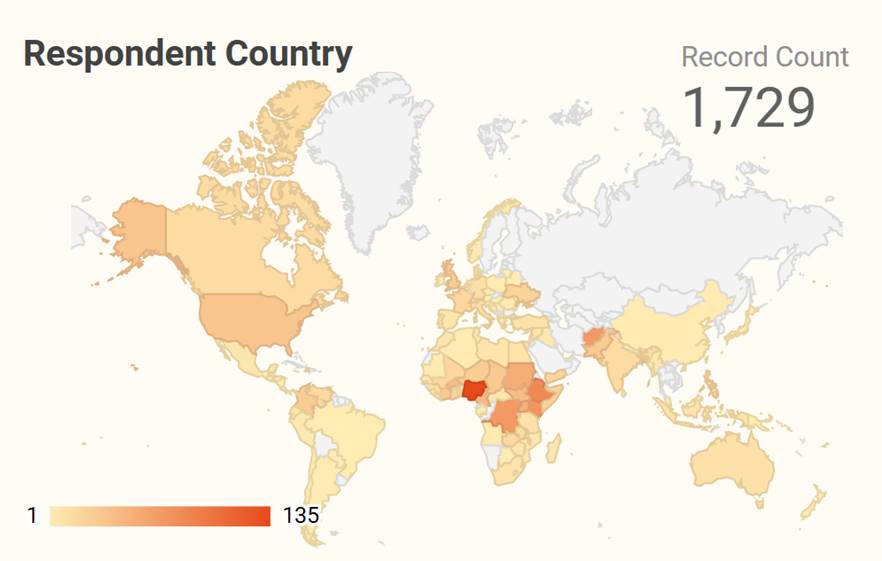

- Launched the January 2026 pulse survey to track if and how adoption patterns and attitudes have shifted, reaching more than 1,700 respondents from 120+ countries

- Led three global online sessions between January and March, including with NetHope and at Humanitarian Networks and Partnerships Weeks (HNPW), to engage the community and to disseminate and discuss findings, generating a combined 1,700 registrations.

- Released a research briefing note in March in English, French and Spanish to support leadership teams alongside an updated data dashboard.

Across the two survey waves (2025-26) with DFS and the supporting engagement campaigns with the wider community, we now have data from 4,200 survey responses. We have also attracted more than 2,700 individuals to learn more through online sessions, and reached thousands more through events, podcasts, social media engagement, and sector media including Devex.

January 2026 pulse survey: what the data is telling us

The crises and upheaval of 2025 appear to have deepened the paradox rather than resolved it: rising individual conviction set against largely static organisational readiness.

- 75% of humanitarians now use AI daily or weekly (+5 percentage points since 2025)

- 65% say AI has improved operational efficiency (+18 percentage points)

- 54% feel AI has supported better decision-making (+16 percentage points)

- Yet only 9% work in organisations where AI is widely integrated (+1 percentage point)

- Only 23% have a formal AI policy (+1 percentage point)

- Only 3% consider themselves expert AI users – essentially unchanged

- 30% are now using custom-built AI agents – a term that did not appear in our 2025 survey (only in interviews with research participants).

Heat map of survey response locations from the January 2026 pulse survey. Over 80% of respondents are from the Global South/Majority – an even stronger representation than the 2025 baseline study (75%).

While the full picture is available in our research briefing note and dashboard, a few findings are worth highlighting here. Notably, AI adoption in the humanitarian ecosystem is not following a Global North-to-South diffusion pattern – the highest growth and most intensive daily usage are concentrated in regions with acute humanitarian needs, including Kenya, Sudan and Bangladesh. Looking at usage alongside organisational governance, we can see that while local organisations are the highest daily users of AI, only 13% have a formal AI policy, compared to 39% in UN agencies. This indicates that the governance gap falls hardest on those already working with fewer resources.

2026 is bringing governance into sharp focus

At the time of writing my personal reflection at the end of the first phase of research in November 2025, I did not see a clear consensus emerging on sectoral priorities for action and clear pathways forward. Five months on, I see AI literacy and governance crystallising as critical priorities – conversations, data and sectoral movements from this phase point to an increased convergence around these as foundational challenges.

With the rapid diffusion of AI across the ecosystem at large – driven by accessible LLMs and now agentic AI – and limited movement on organisational AI governance, right now in 2026, I believe this evidence points to humanitarian AI as a governance and protection challenge, rather than primarily as an innovation agenda.

The 2026 AI Index Report just released by the Stanford Institute for Human-Centered Artificial Intelligence (HAI) provides data evidencing that responsible AI is not keeping pace with AI capability: “Documented AI incidents rose to 362 [in 2025], up from 233 in 2024. Adding to the challenge, recent research found that improving one responsible AI dimension, such as safety, can degrade another, such as accuracy.” This evidence underscores the urgency of the governance and accountability piece.

What are the sentiments across the sector? The conversation is maturing: cautious optimism with an eye on ethical risks

Across our three 2026 webinars, sentiments and lines of questioning have noticeably shifted since the August 2025 report launch. The question is shifting from “should we use AI?” to “how can we use it – responsibly?”

Speakers at our February 2026 webinar: Beyond the hype: Ground truth on AI across the humanitarian sector held in partnership with Data Friendly Space

In 2026, humanitarians are keen to hear more about specific use cases and to learn from each other – and the discussions tend to be more focused and grounded in the reality of current capabilities and context rather than more future-facing aspirational large-scale deployments.

In webinars, attendees raise specific questions that demonstrate close engagement with the discourse, including the rise of AI agents, small language models for low-connectivity settings, climate impact, data sovereignty, and local leadership.

It has been encouraging to see AI conversations becoming more mainstream among practitioners alongside what appears to be increased confidence and sense of psychological safety around these conversations, including on LinkedIn.

Looking at the survey comments, the tone can be characterised on balance as cautiously optimistic – those who point out the benefits now and in the future usually add caveats and concerns too.

A senior leader in operations at a local NGO in Somalia said:

“AI has strong potential to improve humanitarian work by supporting data collection, faster decision-making, and better targeting of assistance. However, more training and access are needed to ensure effective and responsible use, especially at local level.”

As one programme manager at a local NGO in the Philippines said:

“Since our work deals with people with complex situations, I think what AI can help in our organisation is that it can help us analyse scientifically, but it cannot replace human interface. So ultimately, we will adopt with caution.”

Respondents often grapple with the human dimension and the role of judgement, highlighting a tension in the use of AI in humanitarian work. I’ve noticed that the word ‘lazy’ surfaces quite frequently in the comments and conversations, particularly among respondents from Africa – from Sudan to Nigeria.

An operations manager from Nigeria, a non-adopter, said:

“AI training should not be done in a manner that will make people become lazy.”

Environmental concerns also came through. A programme team lead at a US-based INGO wrote:

“In our sector we need to address the environmental impact and exacerbated digital divide that AI is escalating.”

Another operations manager noted they use AI “only begrudgingly” given concerns about water and energy consumption.

These are considered, values-led positions that point to something important that I personally view as overlooked: near-universal individual uptake of AI tools driven by LLMs does not mean universal organisational adoption is inevitable, or required in every context. There must remain the right to say no.

An overlooked perspective? Organisational AI adoption and the case for intentionality over inevitability

To me, what the discourse often underexplores is the significant proportion of the sector that has not embarked upon AI adoption – including those who do not intend to. 22% of January survey respondents said their organisation has not yet started AI adoption but intends to, and a further 10% have no intention of adopting AI at all.

28% of survey respondents said that their organisation is currently in the AI experimentation and piloting phase. Organisations may stay in that phase for reasons of resource, governance, deliberate caution, or context.

In my view, the humanitarian AI paradox does not represent a gap to be closed in a linear way; rather, it is a space to be navigated and led by each actor in each context – with purpose and intentionality.

Yet, a conscious non-adoption decision for an organisation does not mean no action is required in this space. Individual usage will continue regardless, creating a vacuum without governance or guardrails.

In our January webinar in partnership with NetHope, Daniela Weber made the case for action on organisational policies as a critical step:

“Everyone in your staff that uses a device will come into contact with AI, either by choice, or because the tools you’re using have AI built in. So having that policy is important.”

What this points to is an informed approach to AI at the individual level and leadership at the institutional level. As Timi Olagunju articulated, this moment calls for “digital leadership”. And in our January webinar with NetHope, Mercyleen Tanui captured the organisational challenge: “AI is not an IT initiative. It is an organisational change initiative.”

Governance is emerging as a priority: from frameworks to operationalisation and accountability

At our January webinar, Esther Grieder from NetHope offered some predictions for 2026: that this could be the year of one significant AI-related error or harmful use case that forces the sector to act on standards, and that AI agents may begin appearing in organograms. These are observations from conversations, not data – but they are a stark reminder of what is at stake if governance continues to lag.

During the same session, Michael Tjalve noted that in his recent experience working across the sector, he had not seen much meaningful movement on AI policy development – and our survey data released shortly after bore that out: just a one percentage point increase in formal organisational AI policies between the two surveys (to 23%).

In our February convening to discuss the findings, Timi Olagunju highlighted this governance gap as a particular concern for the sector:

“The fact that AI policy is at a slow pace compared to the growth in use within the humanitarian sector is concerning. Governance frameworks provide the context in which AI can truly serve the public good.”

Liz Devine also called for shared standards specifically for humanitarians, and in doing so reframed governance as an enabler:

“One of the things the sector really needs to figure out is developing a set of minimum standards for really sensitive areas like protection and gender-based violence…we need a set of core minimum standards that organisations agree on.”

This lack of shared approaches and standards, she argued, is holding organisations back:

“I actually think that’s one of the reasons we’re seeing slower organisational adoption. Organisations are grappling with this level of risk. We don’t want to put out solutions we can’t back up with the ethical safeguards needed.”

Nayid Orozco Bohorquez reinforces this view of governance as enabler rather than inhibitor of innovation:

“Clear policies don’t stop innovation – they give people the confidence to start using AI in a responsible and open way.”

On AI tools, Mercyleen Tanui advocates: “Right-size the tool so that you are not starting bigger by default.”

I think we can apply this principle more broadly: considering right-sized governance frameworks, tools and audit requirements for each organisation and context, to shape governance as an enabler and not a burden.

Across diverse actors and contexts, there is a growing convergence: governance is a collective priority. The direction of travel is encouraging, though critical work lies ahead. As a newly-released report from NetHope puts it: “responsible AI governance is emerging but structurally fragmented” and “without shared standards to bridge this gap, responsible AI practices will continue to vary widely across organizations.”

Funders and donors have a crucial role to play. Analysis just published by Candid highlights the scale of the disconnect: 84% of nonprofits need funding to develop and scale AI tools, yet only 17% say their funders have engaged them on AI.

In a period of hyper-prioritisation, investing in AI governance may not represent the most urgent ask. Yet, when we view the humanitarian AI landscape through a protection lens, a case emerges that this is the moment when that investment is needed – to protect sensitive data, safeguard vulnerable populations, and mitigate risk at a time of acute shocks and vulnerabilities across the sector. What happens next – how emerging governance frameworks are operationalised across different contexts – is critical, including the involvement of, and focus on, smaller and local actors.

Centre local actors: harnessing contextual knowledge and innovation

In our research in both 2025 and 2026, what really emerged were the creative and resourceful applications and approaches of local actors in the Global South. This finding is also documented by Daniela Weber in a NetHope article, where she concludes from her extensive work in this space that “the Global South leads innovation.” She believes this year more AI use cases and tools will be developed locally in the Global South, particularly specialised language models and domain-specific solutions tailored to regional needs.

In 2024, local and national actors participated in 93% of Humanitarian Country Teams (HCTs) globally (GHO Report 2025). By that logic, humanitarian AI by its very definition should meet the specific needs of these local and national actors. Their experience should be shaping the agenda in line with broader localisation processes to shift power to local actors.

Active work must be done in the AI space to counter the risk that ‘humanitarian AI’ becomes shorthand for large-scale technical deployments by well-resourced international actors, particularly when the current adoption patterns are globally distributed and bottom-up and often without governance or organisational support. This is a pivotal moment for action to counter and not perpetuate digital divides.

In our HNPW session focused on local leadership in AI development in March, Musaab Abdalhadi set the frame:

“When we talk about local leadership in humanitarian AI, we often focus on access to technology, but from my perspective, the real issue is power, not technology.”

Speakers at our March 2026 HNPW session: Bridging digital divides: centring Local leadership in humanitarian AI development

Musaab’s words carry weight because they are grounded in lived experience and action. In November 2025, he initiated what we believe was one of the first AI training sessions designed specifically for Sudan’s crisis context – delivered fully in Arabic, in partnership with Ali Al Mokdad and the HLA. The 28 participants – drawn from Emergency Response Rooms, youth-led volunteer groups and local NGOs – were already using AI informally and without guidance.

As a training participant reflected:

“Since the beginning of the war, we have relied on artificial intelligence to meet donor requirements – this training helped me use these tools more effectively and confidently.”

Local responders in Sudan participating in remote-delivered humanitarian AI training in November 2025. Image credit: Save the Children in Sudan

The infrastructure reality behind many of these conversations presents significant barriers. In the January survey, a senior leader in data and information management at a local organisation in Cameroon, responding in French, described the context of low internet penetration, limited digital literacy, and rural areas where electricity is still a luxury across much of Sub-Saharan Africa. And yet, he wrote, in a world of constant change, there is a duty to adapt and level up – not for its own sake, but to improve the daily lives of the populations who are suffering.

The agency of local actors and community-centred approaches are central to this. As Ali Al Mokdad said:

“Let’s not overestimate the risks and underestimate the opportunities. Local organisations in Nigeria, Lebanon, Syria, Sudan, Kenya, Rwanda are giving us very good examples of how to leverage these tools. The main important thing is not to stand in the way of local organisations and local leaders.”

In our February webinar, Rebecca Chandiru illustrated what community-embedded AI looks like in practice:

“Once the local community is involved in building and testing the models, they become very confident and they trust that data.”

Gülsüm Özkaya, whose research focuses on AI-generated visuals from the perspective of crisis-affected people, offered a reframe that cuts through much of the international versus local debate – which may be a false dichotomy, particularly when viewing the AI landscape:

“The main divide right now is not about being global or local. It’s about being digitally fluent and AI-aware. A local organisation that masters the use of AI tools can access the opportunities and create impact as effectively as the global giants.”

Shortly before HNPW in March, I published an interview with Ivan Toga – a pulse survey respondent joining the call from Rhino Refugee Camp in northern Uganda. As Ivan summarises:

“We need an artificial intelligence that speaks the language of the donor and the language of the village where I come from. We need an AI that is good for all of us.”

Looking ahead: collective action and local leadership

In these two phases of the research, we have focused our efforts on measuring and communicating the paradox and its implications. The next phase is about responding to it – connecting and convening humanitarian actors, and supporting collective action to find solutions in small and big ways.

To promote AI literacy and skilling, we are currently exploring microlearning guides and bite-sized content. As a direct follow-up to our January webinar with NetHope, we published a practical quick-start guide on organisational AI readiness. It is also encouraging to see new AI courses emerging across the ecosystem, including through NetHope on Kaya, a new free humanitarian AI course from Elrha, as well as nonprofit AI learning initiatives from Microsoft. These represent encouraging movement toward contextualised learning for nonprofits and humanitarians.

In the governance arena, the first instalment of the UK FCDO-funded SAFE AI Framework is scheduled for release in May 2026, with a vision to establish “the nature and scale of the humanitarian AI governance gap, why it matters and why individual agency policies cannot close it alone.” This stands alongside increasing sectoral discourse and outputs focused on governance, responsible AI and accountability.

2026 remains a critical window. Humanitarian AI will keep growing and we need to move forward with purpose – with informed, intentional, values-led choices before key decisions are made and vendors are locked in. The sector’s choices must align with our overall commitments to shift power toward local actors, and ensure the tools emerging truly serve the people and principles we are here for.

As Musaab Abdalhadi said, local actors should be at the table as co-designers, not testers. As Ivan Toga advocates, we need models built from community, not delivered to it. And as our research keeps showing us – the energy, the ingenuity, and the will are already there.

Ali Al Mokdad encapsulates the opportunities, challenges and what is at stake:

“AI tools and AI in general could be either the best or the worst thing that could ever happen to humanity and to what we do. And localising AI could take us to the best-case scenario.”

Coordinated efforts together with donors, funders and other influential actors are pivotal in the next stages to operationalise and embed sector efforts. The risk should not be placed on individuals or local actors, but on those who are mandated and resourced to bear this responsibility. No single actor can shift this alone. It takes a movement, built from contributions big and small – including yours.

About the author

Ka Man Parkinson is Communications Lead at the Humanitarian Leadership Academy, where she leads on global engagement and community building initiatives as part of the organisation’s convening strategy. Ka Man blends multimedia campaigns with learning and research – she produces and hosts the Fresh Humanitarian Perspectives podcast and HLA Webinar Series, building a culture of thought leadership. Her interdisciplinary background – spanning two decades of communications and marketing experience in the international education and nonprofit sectors, and an academic grounding in business management and IT – shapes her holistic and people-centred approach to her work. She initiated and co-leads the first global study to track how humanitarians are using AI in their work. Ka Man is based near Manchester, UK.

Acknowledgements

This research and convening initiative is a collective effort that would not be possible without input, engagement and support from across the sector. The author would like to thank all research participants who generously shared their experiences and insights; research co-leads Lucy Hall (HLA) and Madigan Johnson (Data Friendly Space); January webinar panellists Esther Grieder (NetHope), Mercyleen Tanui (WaterAid), Michael Tjalve (Humanitarian AI Advisory/Roots AI Foundation), and Daniela Weber (NetHope); February webinar panellists Rebecca Chandiru (Humanitarian OpenStreetMap Team), Liz Devine (GOAL Global), Timi Olagunju (The Timeless Practice), and Nayid Orozco Bohorquez (now Mercy Corps); HNPW session panellists Musaab Abdalhadi (Save the Children in Sudan), Ali Al Mokdad (independent), and Gülsüm Özkaya (now IHH); and interview guest Ivan Toga (humanitarian practitioner from Uganda).

Note and disclaimer

This article is a personal reflection, prepared to promote learning and dialogue. It is not intended as prescriptive policy advice, or as organisational endorsement of specific individuals, organisations, technologies or approaches. Organisations should conduct their own assessments based on their specific contexts, requirements, and risk tolerances. Quotes from contributors have been drawn from webinars, interviews, and published materials; the views expressed in this article are those of the author and do not necessarily reflect the positions of quoted individuals or their organisations. This research has been conducted independently by the Humanitarian Leadership Academy in partnership with Data Friendly Space and has received no external funding.

About the research

The January 2026 pulse survey was conducted by the Humanitarian Leadership Academy and Data Friendly Space, building on the May/June 2025 foundational study – creating a global baseline of AI adoption across the humanitarian sector. Since the report’s release in August 2025, the research has achieved significant global reach and impact: informing academic research in Türkiye, Colombia, Switzerland, and Germany; supporting an Arabic-language AI training initiative for local responders in Sudan; contributing to civil society advocacy in Ukraine; and shaping high-level and practitioner dialogue on responsible humanitarian AI at international conferences and webinars, as well as through a six-part podcast series with a focus on global and African humanitarian AI perspectives. A combined total of more than 4,200 responses have been received across the two survey waves. All reports and briefing notes are available on the research landing page in English, French and Spanish.